Why real-time robot control is the key to unlocking Physical AI

The AI models reshaping our world are hitting a wall. Not in silicon, but in steel. The missing link between intelligence and industry is not smarter models. It is real-time control.

We are living through an extraordinary moment in artificial intelligence. Frontier models can reason, plan, and perceive the world with increasing sophistication. The field of “physical AI” is advancing at a breathtaking pace. Yet if you walk onto a real factory floor today, you will find robots that are largely blind to their environment, executing preprogrammed paths, stopping the moment anything unexpected happens. The gap between AI research and industrial reality is not a data problem or a model problem. It is a control problem.

Not faster. Fundamentally different.

Here is the framing that most people in physical AI get wrong: they treat real-time control as a performance improvement. A speed upgrade. The same thing, done faster. It is not. Real-time control is a categorical capability unlock. A system that reacts at servo frequency and a system that does not are not doing the same job at different speeds. They are doing fundamentally different jobs.

Consider the analogy of human vision. A person who sees a ball thrown at them and can catch it is not simply a “faster” version of someone reviewing a photograph of the throw after the fact. The real-time perception closes a loop that makes an entirely new class of behavior possible. Remove the loop and the capability disappears entirely, regardless of how good the underlying model is.

The same principle governs robotic manipulation. A robot that receives a corrected pose command every 8 milliseconds is not a faster version of a robot that receives a trajectory once and executes it blindly. It is a qualitatively different machine. It can adapt. It can recover. It can operate in a world that does not perfectly match the plan. The robot without the loop cannot do these things at all, no matter how much compute you throw at the offline planning stage.

Real-time physical AI is not about running inference faster. It is about closing the loop between perception and action at the robot’s native control frequency. Close the loop and new capabilities become possible. Leave it open and they remain permanently out of reach, regardless of model quality.

Rudy Cohen

Co-founder & CEO, Inbolt

Why real-time control unleashes three capabilities

Think about what makes manipulation hard. A human picking up a bolt does not just plan a trajectory and execute it blindly. They continuously adjust grip, compensate for a slippery surface, react to a part that has shifted a millimeter. Our hands adjust constantly based on what our eyes see, so naturally that we barely notice it. For robots, the same behavior has been an unsolved engineering challenge for decades. And the reason is precisely that the loop was never closed. Three capabilities define truly intelligent robotic manipulation. All three are impossible without a closed real-time loop.

Dexterous manipulation.

Grasping, inserting, assembling: these tasks are governed by contact dynamics that evolve in milliseconds. A model that plans a motion and sends it as a single command cannot adapt to micro-variations in part geometry, surface friction, or positional tolerance. These variations are not edge cases. They are the norm in real industrial environments.

Consider a robot inserting a body panel clip on an automotive line. The clip tolerance is under a millimeter. The part arrives on a moving conveyor with slight positional variation every single cycle. A model that plans once and dispatches a fixed command either hits the nominal pose or misses. There is no in-between. With real-time correction streaming at servo frequency, the robot adjusts continuously during approach, compensating for each variation as it arrives. Without it, the task requires either perfect upstream fixturing or constant human intervention. Neither is acceptable at scale.
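To make the difference concrete, here is a minimal one-axis sketch of the clip example. It is a toy model, not Inbolt's implementation: the function name `final_error_mm`, the gain of 0.2, and the 1.5 mm offset are all assumptions chosen for illustration. Both robots follow the nominal conveyor motion; only the closed-loop one also corrects a fraction of the measured error every 8 ms cycle.

```python
CYCLE_S = 0.008   # 8 ms control period (125 Hz), the figure quoted in this article
GAIN = 0.2        # hypothetical fraction of the measured error corrected per cycle

def final_error_mm(offset_mm, conveyor_mm_s, cycles, closed_loop):
    """Tool-to-part error after `cycles` periods, along the conveyor axis."""
    feed = conveyor_mm_s * CYCLE_S      # nominal conveyor travel per cycle
    tool, part = 0.0, offset_mm         # the part starts off-nominal by offset_mm
    for _ in range(cycles):
        # Closed loop: vision measures the current error and corrects part of it.
        correction = GAIN * (part - tool) if closed_loop else 0.0
        tool += feed + correction       # both robots follow the nominal motion
        part += feed
    return abs(part - tool)

open_loop_err = final_error_mm(1.5, 50.0, 50, closed_loop=False)
closed_loop_err = final_error_mm(1.5, 50.0, 50, closed_loop=True)
```

In this model the open-loop error stays at the full 1.5 mm offset no matter how many cycles run, while the closed-loop error shrinks by a factor of (1 - GAIN) every cycle.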

Reaction to unplanned events.

In the real world, a part falls, a conveyor slips, a human steps into the workspace. Physical AI must detect and respond to these events continuously. The window for safe and effective reaction is measured in tens of milliseconds. A system that perceives the world episodically and re-plans offline is not a slow version of a reactive system. It is a different system entirely, one that by design cannot respond to events it has not anticipated.

Robustness to dynamic situations.

Modern manufacturing is not the repetitive assembly-line work of the 1970s. Batch sizes shrink, product variants multiply, logistics flows change shift by shift. An intelligent robot must continuously update its behavior based on live visual and sensory data. This is only possible if the model is running continuously inside the control loop, not issuing instructions from outside it.

How real-time control actually works

In practice, real-time robot guidance means streaming joint positions or Cartesian poses directly to the robot controller at high frequency, synchronizing the AI model’s output with the robot’s own servo loop. Rather than computing a complete trajectory and dispatching it, the model becomes a continuous, reactive source of setpoints.
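The streaming pattern described above can be sketched as a fixed-period loop. This is an illustration under stated assumptions: `next_setpoint` and `send` are hypothetical callables standing in for the model output and the controller interface, and the 8 ms period is the typical figure cited in this article. Standard Python on a general-purpose operating system gives no hard timing guarantee, so the sketch counts late cycles instead of pretending they cannot happen.

```python
import time

PERIOD_S = 0.008  # 125 Hz, the typical servo rate cited in this article

def stream_setpoints(next_setpoint, send, cycles, period_s=PERIOD_S):
    """Call send(next_setpoint()) once per period; return the number of late cycles."""
    late = 0
    deadline = time.perf_counter() + period_s
    for _ in range(cycles):
        send(next_setpoint())               # compute and dispatch one setpoint
        remaining = deadline - time.perf_counter()
        if remaining > 0:
            time.sleep(remaining)           # wait out the rest of the cycle
        else:
            late += 1                       # compute + send overran the budget
        deadline += period_s                # absolute deadlines avoid drift
    return late

# Usage with stand-in data: five Cartesian positions, collected in a list.
sent = []
poses = iter([[0.0, 0.0, 0.1 * i] for i in range(5)])
late = stream_setpoints(lambda: next(poses), sent.append, cycles=5)
```

Scheduling against absolute deadlines, instead of sleeping a fixed amount after each send, keeps the average rate locked to the period even when individual cycles take different amounts of time.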

This sounds simple. It is not.

125 Hz: typical servo frequency

<8 ms: maximum latency budget per cycle

∞: ways a robot can fault

Each robot brand exposes a different real-time interface, with different protocols, different control frequencies, and different safety models. Jerk limits, velocity saturation, and fault-recovery behaviors vary dramatically across manufacturers. A control pipeline built for one robot will fail on another.
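As a rough illustration of what those limits mean for a command stream, the sketch below shapes a raw target for a single axis so that no setpoint exceeds a velocity cap or an acceleration cap. The class name `AxisLimiter` and all numeric limits are hypothetical, not values from any manufacturer. It does not limit jerk, which would need a further layer, and it may overshoot slightly before settling. A production pipeline would use a proper online trajectory generator tuned per robot.

```python
import math

class AxisLimiter:
    """Shape a raw target stream for one axis under velocity and acceleration caps."""

    def __init__(self, v_max, a_max, dt, start=0.0):
        self.v_max, self.a_max, self.dt = v_max, a_max, dt
        self.pos, self.vel = start, 0.0

    def step(self, target):
        err = target - self.pos
        # Fastest speed from which the axis can still brake to rest within |err|.
        v_brake = math.sqrt(2.0 * self.a_max * abs(err))
        v_des = math.copysign(min(self.v_max, v_brake, abs(err) / self.dt), err)
        # Change velocity by at most a_max * dt per cycle.
        dv_max = self.a_max * self.dt
        self.vel += max(-dv_max, min(dv_max, v_des - self.vel))
        self.pos += self.vel * self.dt
        return self.pos

# Usage: a 100 mm step request, with hypothetical limits in mm/s and mm/s^2.
limiter = AxisLimiter(v_max=250.0, a_max=5000.0, dt=0.008)
path = [limiter.step(100.0) for _ in range(200)]
```

The same raw target produces a different setpoint stream for every set of limits, which is one reason a pipeline tuned for one controller does not transfer unchanged to another.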

Beyond the hardware heterogeneity, the software challenge is severe. The model must produce smooth, physically consistent command streams under strict latency constraints. A single dropped frame or command discontinuity can trigger an emergency stop, interrupt production, and require manual restart. In an industrial environment, that is not a bug. It is a business-critical failure.
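A simple way to picture the two failure modes named above is a check over a recorded command stream. The sketch below is an assumption-laden illustration: the function name `find_stream_faults`, the 50% timing tolerance, and the 2.0 mm step limit are hypothetical, and real controllers apply their own, vendor-specific criteria.

```python
def find_stream_faults(samples, period_s=0.008, max_step=2.0, jitter_tol=0.5):
    """samples: list of (timestamp_s, position). Returns a list of (index, reason)."""
    faults = []
    for i in range(1, len(samples)):
        (t0, p0), (t1, p1) = samples[i - 1], samples[i]
        # A gap well beyond one period means a command arrived late or was dropped.
        if t1 - t0 > period_s * (1.0 + jitter_tol):
            faults.append((i, "late_or_dropped"))
        # A jump larger than the per-cycle limit is a command discontinuity.
        if abs(p1 - p0) > max_step:
            faults.append((i, "discontinuity"))
    return faults

# Usage: the third sample arrives a full cycle late, the fourth jumps by 7 mm.
log = [(0.000, 0.0), (0.008, 1.0), (0.024, 2.0), (0.032, 9.0)]
faults = find_stream_faults(log)
```

Running a check like this offline on logged streams is cheap. On a live line, the equivalent condition is what triggers the stop.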

The industry’s blind spot: proven hardware left behind

Here is the uncomfortable truth about the current wave of physical AI demonstrations: almost none of them run on the robots that actually dominate global manufacturing.

FANUC alone has over 900,000 robots installed worldwide. FANUC, KUKA, and Yaskawa together represent the overwhelming majority of industrial robot deployments in automotive, aerospace, electronics, and heavy industry. These are not legacy machines awaiting replacement. They are the backbone of global production, chosen for proven reliability, tight tolerances, and decades of field validation.

Yet the physical AI systems demonstrated in labs and at conferences almost universally run on newer robot brands. These are excellent for research, but they are not what runs the world’s factories.

The reason is the same closed-loop problem described above. The real-time interfaces on industrial controllers are designed for deterministic, validated automation. They are not designed for the probabilistic, latency-variable outputs of neural networks. Getting a FANUC to accept and faithfully execute high-frequency streaming commands from an AI vision system, without faulting, without compromising safety, and without degrading cycle time, requires solving the real-time control problem completely. Most teams have not solved it.

Until now, the realistic deployment target for physical AI has been the fraction of the market using collaborative robots. The vast majority of industrial capacity has been out of reach. Not because the models were not good enough. Because the loop was never closed.

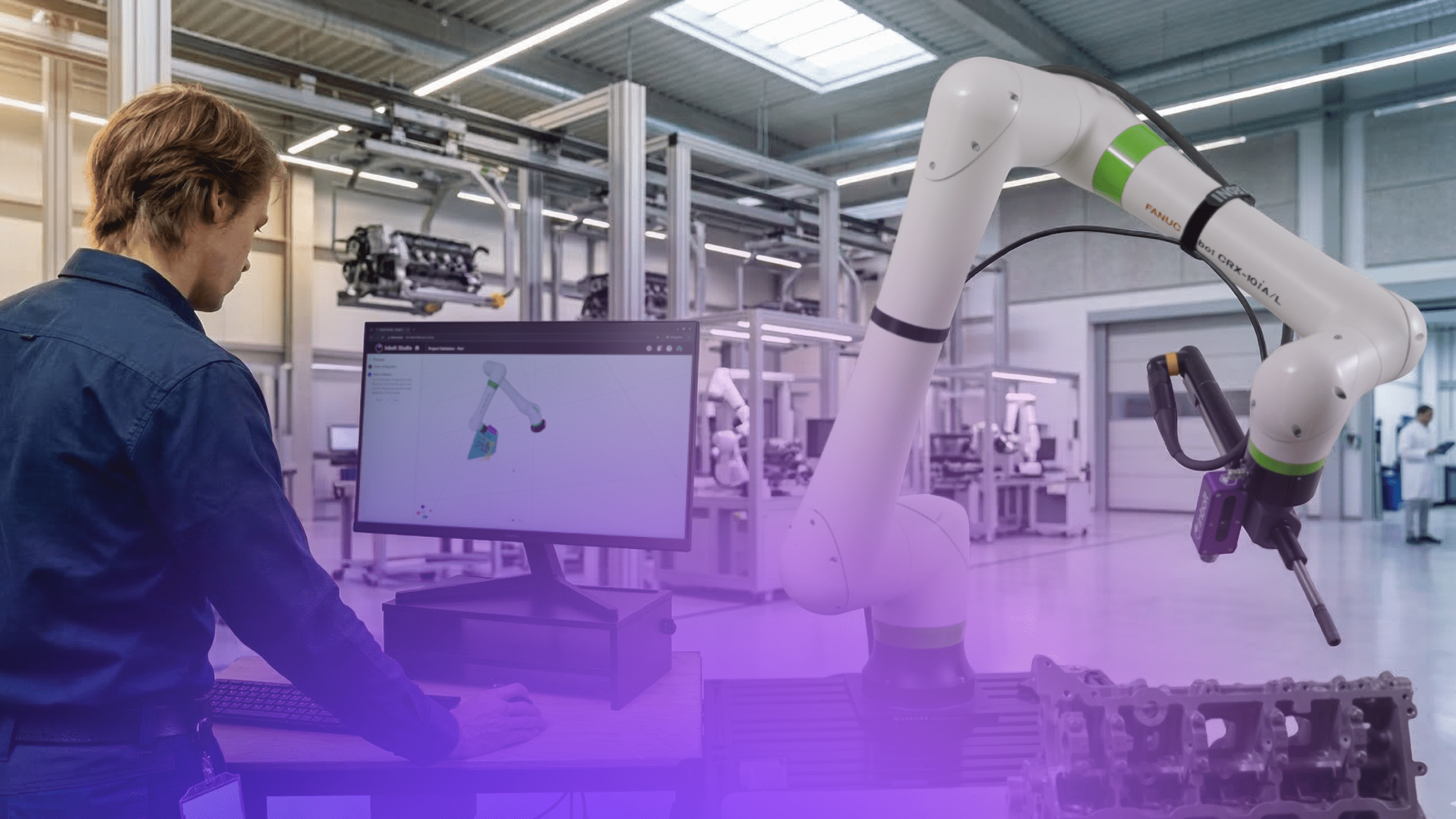

What Inbolt has built

At Inbolt, we set out to solve this problem from first principles. Not for a single robot brand, but for the full landscape of industrial hardware that actually exists in production environments today.

We have developed a high-frequency, robot-agnostic control pipeline that connects our 3D vision and AI models directly into the servo loop of industrial robots, including FANUC, the platform most physical AI teams have left untouched. Our system streams guidance signals at the native control frequency of each platform, with the smoothing, jerk management, and fault-tolerance logic required to operate reliably on a real production line.

The validation is in production. Twenty robots run our guidance layer on a moving assembly line at a top-three global automaker. This is not a controlled lab but a live line where the vehicle is in motion during the operation. More than 100 robots across plants on two continents handle use cases from body panel loading to precision assembly. These are not pilots. They are production infrastructure.

This means our models do not hand off a trajectory and walk away. They observe, compute, and command continuously, reacting to what the camera sees in real time. If a part shifts during approach, the robot adjusts. If the grasp needs to be corrected mid-motion, it corrects. The intelligence is not a preprocessing step before the robot moves. It is woven into the motion itself.

The result is the closed loop. And with it, the entire class of capabilities that the loop makes possible: adaptive manipulation, real-time fault recovery, robustness to part variance, and deployment on the industrial hardware that factories actually rely on.

The race to physical AI is not won by the team with the most impressive model. It is won by the team that closes the loop: intelligence running inside the machine, at servo frequency, on the hardware that industry already owns and trusts. That is the problem we have spent years solving at Inbolt. Close the loop and physical AI becomes real. Leave it open and it remains a research demo, regardless of how good the underlying models get.
